The rapid rise of Artificial Intelligence is undeniable. Around the world, governments are experimenting with machine learning models to design policy, deliver services, and even make diplomatic decisions once reserved for humans. The reach of the technology is breathtaking, but so are its risks. AI offers efficiency and insight, yet without accountability it can deepen inequality and erode democratic trust.

For someone who has spent decades working on governance and negotiation, the challenge feels familiar: powerful tools demand careful rules. The question is whether AI will strengthen democracy or automate it out of relevance.

AI is transforming public administration. Algorithms already assist officials in auditing contracts, detecting fraud, and improving service delivery. Properly designed, these systems help civil servants make faster and fairer decisions. In the United States, machine-learning tools now scan millions of procurement documents to detect anomalies, and similar experiments are spreading through ministries across Europe, Asia, and Africa.

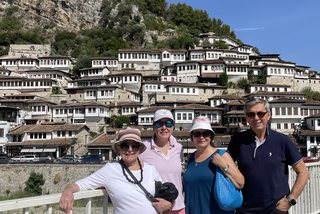

Albania offers one of the most striking examples. Its e-Albania portal delivers thousands of government services online, reducing paperwork and face-to-face bureaucracy. More recently, Diella, an AI-driven virtual assistant, was elevated to a symbolic “AI Minister,” advising on procurement and public administration. The move drew international attention and sparked debate at home: can an AI system hold public office, and if so, who bears responsibility for its actions?

The ambition is clear, but as governments digitize, they also expose themselves to questions of legitimacy and oversight. Citizens may gain convenience while losing visibility into how decisions are made.

Artificial Intelligence is no longer confined to domestic governance; it is also reshaping diplomacy. Machine learning models now analyze vast amounts of data, from social media sentiment to trade flows, to predict crises or identify leverage points in negotiations. Advanced models are being tested to simulate negotiation strategies and multi-actor decision spaces. When layered onto classical diplomatic tools such as game theory and multi-criteria decision analysis, they can model hundreds of negotiation rounds, revealing potential compromises, shifts in leverage, or escalation risks long before humans reach the table. In diplomacy, this means AI is moving from reactive support to anticipatory analysis, helping negotiators understand not only where talks are heading but also how subtle changes in language or timing might reshape the outcome.

In training environments, the potential is also immense. Negotiators can test positions, rehearse responses, and analyze outcomes through interactive virtual scenarios. In my own work on mediation and strategic gaming, I have seen how AI can expand these exercises, turning a single scenario into a dynamic ecosystem that evolves with every decision.

There is, however, a catch. Large language models trained on global text data have shown erratic behavior when applied to real-world policy dilemmas, sometimes recommending aggression where caution is warranted or privileging powerful actors in ways that reflect historical bias. AI can expose a diplomat’s blind spots, but it cannot carry moral judgment. Negotiation still depends on empathy, culture, and restraint—qualities that no dataset can encode.

As AI spreads across the public sector, the need for strong governance frameworks becomes urgent. These frameworks define how algorithms are built, tested, and held accountable. Without them, technology can easily outpace both ethics and the law.

The European Union’s Artificial Intelligence Act, which entered into force in 2024, represents the world’s first comprehensive effort to regulate AI. It classifies systems into four risk levels (unacceptable, high, limited, and minimal) and imposes strict transparency, oversight, and safety obligations on developers and users. High-risk systems must be explainable, auditable, and supervised by humans. The Act’s provisions will take effect gradually between 2025 and 2027, setting a standard that will influence not only the European Union but also the countries aspiring to join it.

For candidate countries such as Albania, aligning early with these norms could build trust, attract partnerships, and protect citizens’ rights. But compliance is not only a technical issue. It requires lawyers, ethicists, data scientists, and civil society watchdogs to work together and translate regulation into practice.

Albania’s digital experiments illustrate both innovation and vulnerability. The government might have the tools to digitize quickly, but it also needs the institutions to govern technology wisely. What may be missing is an independent, multidisciplinary body capable of evaluating AI deployments, auditing algorithms, and advising on legal and ethical implications before they turn into political crises. Such an institution could play several roles: assessment, education, translation, and trust-building. Without this kind of buffer, digital progress risks outpacing democratic control.

The promise of AI remains exciting. It can make governments faster, data smarter, and policies more responsive. Yet the technology also tests democracy’s core assumption that authority must remain human, visible, and accountable.

______

Ledio Cakaj is a former U.S. diplomat and UN investigator with more than 20 years of experience in governance, policy analysis, and negotiation. He uses AI to edit and translate his work when needed. For more on him: www.lediocakaj.com.